... and the Future of Ranking Model Architecture

Don’t want to read a wall of text? Check out the audio & video overview - Thanks to Notebook LM :)

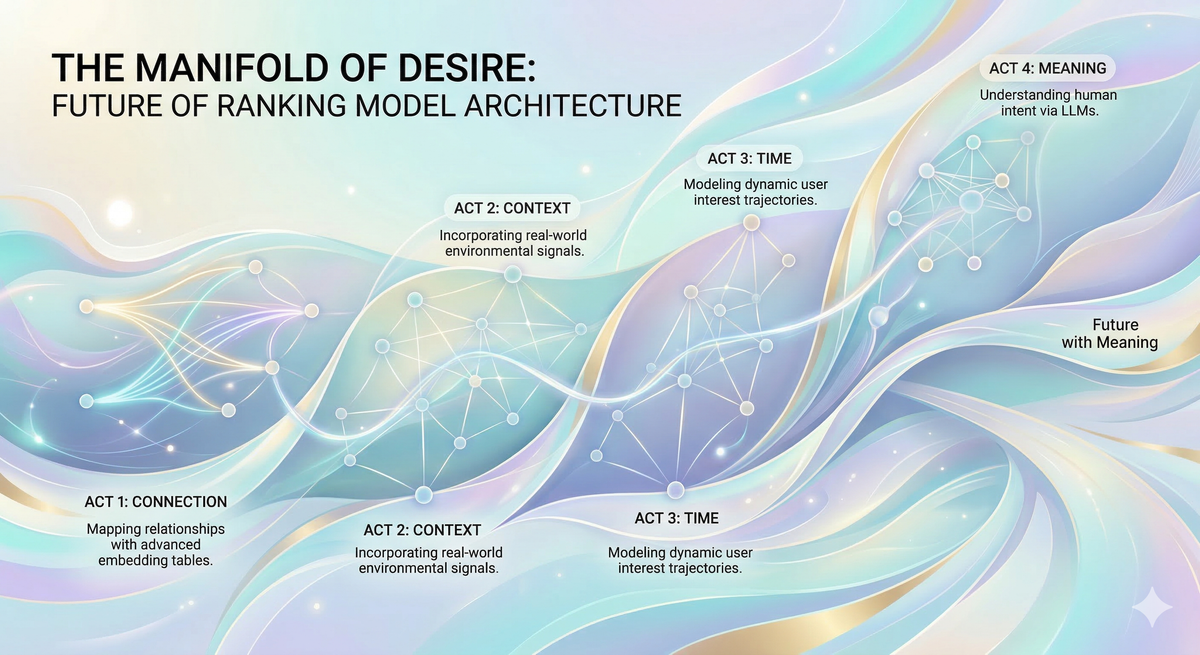

If Large Language Models (LLMs) are the engines that have mastered human knowledge and reasoning encoded in language, Recommender Systems (RecSys) are the cartographers of human desire. Beneath the noise of our digital behaviors—every scroll, click, and swipe—lies a hidden, structured geometry: the manifold of human preference. As an AI Research Product Manager & Full-Stack Builder at Meta, I experienced a professional identity crisis triggered by the recent dominance of LLMs: are traditional recommendation engines becoming obsolete? This post resolves that tension, deconstructing the four-act architectural evolution of RecSys to explain why mapping the human psyche requires a fundamentally different engine than mapping human language.

The Core Thesis

The Manifold Hypothesis is the theoretical justification for why ML models even work at all. It suggests that while real-world data appears high-dimensional and noisy, it actually lives on structured, low-dimensional surfaces called manifolds. The job of an ML model is to act as a cartographer: to find this hidden structure and learn its shape.
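As a rough illustration of that idea, the sketch below builds synthetic data that looks 50-dimensional but is generated from only 2 hidden factors, then checks how much of the variance sits in the top two directions. It uses a flat linear subspace, which is the simplest special case of a manifold; the sizes, noise level, and variable names are all assumptions chosen for the example.

```python
import numpy as np

rng = np.random.default_rng(0)

# Hypothetical setup: 500 points described by 50 observed features,
# but generated from only 2 hidden factors plus a little noise.
n_points, latent_dim, observed_dim = 500, 2, 50
latent = rng.normal(size=(n_points, latent_dim))
projection = rng.normal(size=(latent_dim, observed_dim))
noise = 0.01 * rng.normal(size=(n_points, observed_dim))
observed = latent @ projection + noise

# Singular values of the centered data show how many directions carry variance.
centered = observed - observed.mean(axis=0)
singular_values = np.linalg.svd(centered, compute_uv=False)
explained = singular_values**2 / np.sum(singular_values**2)

top2_share = float(explained[:2].sum())
print(f"share of variance in the top 2 of {observed_dim} directions: {top2_share:.4f}")
```

Real preference data is curved and far noisier than this, so a model needs more than an SVD to find the structure, but the intuition is the same.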

This post posits that the evolution of RecSys is a journey of unlocking the "Human Preference" manifold, one dimension at a time:

- Act 1: Connection: Mapping the lines of identity between users and content.

- Act 2: Context: Layering in the environmental texture of the "here and now."

- Act 3: Time: Capturing the sequential "Arrow of Time" and user momentum.

- Act 4: Meaning: Breaking the semantic bottleneck to understand human intent.

This lens not only resolves architectural debates like Sparse Net vs. Dense Net and Sequence Arch vs. Interaction Arch, but also defines our path forward: a successful next-generation architecture must be a unified engine, one capable of hosting all the dimensions of the human preference manifold simultaneously.

Act 1: The Discovery of Connection

In the late 2000s and early 2010s, recommender systems were primarily tools for catalog navigation. The defining products of this era were Netflix (in its DVD-by-mail days) and Amazon. These systems relied almost entirely on explicit signals—users were asked to do the heavy lifting:

- “Rate this movie from 1 to 5 stars.”

- “Give this product a Thumbs Up.”

- “Tell us you ‘Own’ this item.”

Because these signals were so clear, the mathematical challenge was straightforward: reconstruct the “ratings”. The $1M Netflix Prize popularized Matrix Factorization (MF), which viewed the world as a two-dimensional grid of User IDs and Content IDs and filled in the missing cells by learning a short vector for each user and each item.
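To make the rating-reconstruction idea concrete, here is a minimal sketch of matrix factorization trained with stochastic gradient descent. The ratings, factor count, learning rate, and regularization strength are made up for illustration, and this is not the exact method of any Netflix Prize entry.

```python
import numpy as np

rng = np.random.default_rng(0)

# Toy explicit ratings as (user_id, item_id, stars); all values are invented.
ratings = [
    (0, 0, 5.0), (0, 1, 4.0),
    (1, 0, 4.0), (1, 2, 1.0),
    (2, 1, 5.0), (2, 2, 2.0),
    (3, 0, 1.0), (3, 2, 5.0),
]
n_users, n_items, n_factors = 4, 3, 2

# One short latent vector per user and per item, starting near zero.
user_factors = 0.1 * rng.normal(size=(n_users, n_factors))
item_factors = 0.1 * rng.normal(size=(n_items, n_factors))

def rmse():
    """Root-mean-square error over the observed ratings only."""
    errors = [r - user_factors[u] @ item_factors[i] for u, i, r in ratings]
    return float(np.sqrt(np.mean(np.square(errors))))

initial_rmse = rmse()
learning_rate, reg = 0.05, 0.01
for _ in range(500):
    for u, i, r in ratings:
        error = r - user_factors[u] @ item_factors[i]
        old_user = user_factors[u].copy()
        # Gradient step on squared error with L2 regularization.
        user_factors[u] += learning_rate * (error * item_factors[i] - reg * old_user)
        item_factors[i] += learning_rate * (error * old_user - reg * item_factors[i])
final_rmse = rmse()

# Fill in an empty cell: user 0 never rated item 2.
predicted = float(user_factors[0] @ item_factors[2])
print(f"RMSE {initial_rmse:.2f} -> {final_rmse:.2f}, predicted rating {predicted:.2f}")
```

The model only ever sees User IDs and Content IDs, which is exactly why it cannot react to anything outside that grid.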

However, the classic MF paradigm has two bottlenecks:

- The Scarcity Wall: the rating data is extremely sparse because most users don’t want to rate every video they watch or every product they browse, leaving the user-item matrix almost entirely empty (often more than 99%).

- Blindness to Contextual Signals: MF ignores rich contextual information, such as time, location, device, or session, that shapes users’ real-world preferences: what you want on a Monday morning (a cup of coffee) may differ from what you want on a Friday night (a massage chair). This led to “one-size-fits-all” recommendations.

Act 2: The Texture of Context

By the mid-2010s, the Big Data era had arrived and demolished the Scarcity Wall of the previous era. Every scroll, hover, and "accidental" click was captured in massive Hadoop clusters and processed by engines like Spark and BigQuery. This shift fundamentally changed the "currency" of RecSys: we transitioned from Explicit Feedback to Implicit Behavior.

But implicit data is a double-edged sword: it’s abundant, but low-fidelity. A click might just be an accident or a user looking for comments. Even explicit signals like "Likes" or "Shares" became noisy; they indicate an action but hide the "why." A share doesn't tell the model if the content was exceptionally high-quality or simply outrageous. We gained volume, but lost clarity on intent.

The Solution: Contextual De-noising

To separate the signal from the noise, we began feeding the model massive amounts of contextual information, such as the time of day, device type, or user’s location. The foundational logic is that an action observed in isolation is ambiguous, but the same action observed within a rich contextual frame becomes informative. Imagine a user clicks on a high-end luxury watch.

- The Noisy Signal: Without context, the model thinks: "They want to buy a $10,000 watch."

- The Contextual Truth: If the model sees the user is on a budget Android device at 2:00 AM on a slow 3G connection, it realizes the click wasn't purchase intent - it was bored window shopping. Context allows the model to "de-noise" the intent.
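The luxury-watch example can be sketched as a tiny logistic scorer in which the same click yields a different purchase-intent estimate once context features are switched on. The feature names, weights, and bias below are hand-set and purely hypothetical; a real model would learn them from data.

```python
import math

# Hypothetical, hand-set weights for illustration only.
WEIGHTS = {
    "clicked_luxury_item": 1.5,
    "budget_device": -1.2,
    "late_night": -0.8,
    "slow_connection": -0.6,
}
BIAS = -2.0

def purchase_intent(active_features):
    """Sigmoid of the bias plus the weights of whichever features are active."""
    logit = BIAS + sum(WEIGHTS[name] for name in active_features)
    return 1.0 / (1.0 + math.exp(-logit))

# The same click, scored without and with its surrounding context.
click_only = purchase_intent({"clicked_luxury_item"})
click_in_context = purchase_intent(
    {"clicked_luxury_item", "budget_device", "late_night", "slow_connection"}
)
print(f"click alone: {click_only:.3f}, click in context: {click_in_context:.3f}")
```

The click itself carries the same weight in both calls; only the contextual frame around it changes the estimate.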

Architectural Breakthroughs: Wide & Deep, SparseNN/DLRM

In 2016, Google published the Wide & Deep paper, and Meta followed with SparseNN/DLRM in 2019. These architectures fused the “memorization” component of the previous era with a new “generalization” component, on hybrid hardware:

- The "Wide" Part (Memorization): Massive CPU-hosted embedding tables that memorized specific connections such as (User X, Item Y) and static attributes like Age or City. It was the system’s "encyclopedia" of known relationships.

- The "Deep" Part (Generalization): The GPU-powered "neural brain" (MLP) that learned to reason. Instead of just remembering, it generalized patterns across billions of "maybe-clicks" to understand how environmental factors—like a rainy day in New York vs. a sunny one in Tokyo—should shift the recommendation.
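A minimal forward-pass sketch of this split, following the framing above: embedding tables handle memorization, a small MLP over dense context features handles generalization, and dot-product interactions combine them. The interaction step is loosely in the spirit of DLRM rather than a faithful copy of either paper, and the weights are random and untrained, so the outputs are not meaningful predictions. All sizes, names, and context values are assumptions.

```python
import numpy as np

rng = np.random.default_rng(0)
n_users, n_items, emb_dim, n_context = 100, 500, 8, 4

# Memorization: embedding tables indexed by sparse IDs.
user_table = rng.normal(scale=0.1, size=(n_users, emb_dim))
item_table = rng.normal(scale=0.1, size=(n_items, emb_dim))

# Generalization: a small MLP that maps dense context into the embedding space.
w_hidden = rng.normal(scale=0.5, size=(n_context, 16))
w_out = rng.normal(scale=0.5, size=(16, emb_dim))

# Top layer: weights over the pairwise interactions plus the context vector.
w_top = rng.normal(scale=0.5, size=emb_dim + 3)

def predict_click(user_id, item_id, context):
    """Return a click probability from IDs plus a dense context vector."""
    user_vec = user_table[user_id]
    item_vec = item_table[item_id]
    hidden = np.maximum(0.0, context @ w_hidden)  # ReLU
    context_vec = hidden @ w_out
    interactions = np.array([
        user_vec @ item_vec,
        user_vec @ context_vec,
        item_vec @ context_vec,
    ])
    logit = np.concatenate([interactions, context_vec]) @ w_top
    return float(1.0 / (1.0 + np.exp(-logit)))

# Same user and item, two hypothetical context vectors.
rainy_new_york = np.array([1.0, 0.0, 0.3, 0.9])
sunny_tokyo = np.array([0.0, 1.0, 0.8, 0.1])
p_rainy = predict_click(7, 42, rainy_new_york)
p_sunny = predict_click(7, 42, sunny_tokyo)
print(f"rainy: {p_rainy:.3f}, sunny: {p_sunny:.3f}")
```

Holding the user and item fixed while changing only the context vector is what lets the score shift with the environment.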

For the first time, we had a Context-Aware Recommender System (CARS). The growing availability of GPUs then led us to the next era of RecSys.

Act 3: The Journey of Time

For years, RecSys viewed user history as a "Bag of Interests", blind to the reality that user preferences are non-stationary: they drift and pivot due to life stages, seasonal changes, or "satiety": the point where you've consumed enough of a topic to become bored with it.

The Solution: Temporal Momentum

The 2017 publication of the Transformer paper, coupled with the surge in high-end GPU availability at Meta around 2022, unlocked a dimension previously too expensive to compute: Time.

New architectures, such as HSTU (Hierarchical Sequential Transduction Units), elevated the specific order of events to a first-class feature. By leveraging the Attention mechanism, these models can process a user’s entire interaction history in milliseconds to detect the direction and momentum of their interests.
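For intuition, here is a sketch of standard scaled dot-product self-attention with a causal mask over a user's interaction history. This is the textbook Transformer form, not HSTU's specific attention variant, and the history embeddings and projection matrices are random placeholders. Production models also add positional or timestamp information, which is omitted here.

```python
import numpy as np

def causal_self_attention(x, w_q, w_k, w_v):
    """Scaled dot-product attention where step t only attends to steps <= t."""
    q, k, v = x @ w_q, x @ w_k, x @ w_v
    scores = q @ k.T / np.sqrt(k.shape[-1])
    # Mask out future events so each position sees only its own past.
    future = np.triu(np.ones(scores.shape, dtype=bool), k=1)
    scores = np.where(future, -np.inf, scores)
    # Numerically stable softmax over each row.
    scores = scores - scores.max(axis=-1, keepdims=True)
    weights = np.exp(scores)
    weights = weights / weights.sum(axis=-1, keepdims=True)
    return weights @ v, weights

rng = np.random.default_rng(0)
seq_len, d_model = 5, 8
history = rng.normal(size=(seq_len, d_model))  # one embedding per past event, oldest first
w_q, w_k, w_v = (rng.normal(size=(d_model, d_model)) for _ in range(3))

outputs, weights = causal_self_attention(history, w_q, w_k, w_v)
current_state = outputs[-1]  # summary of the whole history as of the latest event
print(np.round(weights, 2))
```

The printed weight matrix is lower triangular: the last row shows how much each earlier event contributes to the model's view of the user right now.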

We finally moved from taking a snapshot to watching the movie. The model now understands that your interests haven't disappeared; they have evolved. Consider the "Skiing Journey":

- Last Month: Researching gear and comparing jacket brands.

- Last Week: Made purchases.

- Today: Seeking "Beginner Tutorials."

- Next Month: Graduating to "Advanced Carving Techniques."

A "Bag of Interests" model would keep trying to show you the ski jacket you already bought. A sequential model recognizes you have moved to the next chapter of your journey.
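The contrast can be shown with a toy log of the skiing journey. The recency-weighted scorer below is only a crude stand-in for a real sequence model, and the event names and decay factor are invented for the example.

```python
from collections import Counter

# Hypothetical interaction log, oldest first.
history = [
    "ski_jackets", "ski_jackets", "ski_jackets", "ski_gear",
    "ski_jackets", "purchase_ski_jacket",
    "beginner_tutorials", "beginner_tutorials",
]

def bag_of_interests(events):
    """Ignore order entirely: the most frequent topic wins."""
    return Counter(events).most_common(1)[0][0]

def recency_weighted(events, decay=0.5):
    """Weight each event by decay**age, so recent events dominate."""
    scores = {}
    for age, topic in enumerate(reversed(events)):
        scores[topic] = scores.get(topic, 0.0) + decay**age
    return max(scores, key=scores.get)

print("bag of interests:", bag_of_interests(history))
print("recency weighted:", recency_weighted(history))
```

The order-blind counter still picks the jacket topic, while even this simple order-aware scorer follows the user to tutorials.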

Act 4: The Future with Meaning

I personally believe the next frontier is the Semantic Dimension. We have reached a Semantic Bottleneck: while we already capture a vast amount of high-intent data, our models remain apprentices at understanding what a user actually means (intent).

To break this wall, we need to integrate the LLM’s inherent ability to reason across text and multi-modal content, turning our existing high-intent signals into a primary steering wheel for the engine. This will unlock the gold mine in the high-intent signals we already have today:

- Explicit Intent: Features like "Dear Algo" on Instagram and Threads, where users describe exactly what they want to see in natural language.

- Search & Query History: Incorporating search patterns - the purest expression of curiosity & intent - directly into the RecSys engine.

- Cross-Modal Intelligence: Harmonizing 3rd-party ads data with our internal signals to create a unified, multi-dimensional user profile.

- Content Deep-Sensing: Truly "watching" the video and "reading" the comments alongside the user to grasp the cultural context and nuance of every interaction.

Strategic Implications

Learning #1: Dimensions are Orthogonal, Not Optional

The "Human Preference" manifold exists at the intersection of every dimension we’ve unlocked. A successful architecture must hold the entire manifold simultaneously, integrating Connection, Context, Time, and Meaning.

This lens explains the heated architectural debates we’ve seen. The tensions, Sparse Net vs. Dense Net or Sequence Arch vs. Interaction Arch, weren't just academic; they were the growing pains of model architectures trying to host a "larger party" of dimensions.

Learning #2: A Two-Fork Strategy Forward

We currently stand at a critical transition point. The co-engagement, contextual, and temporal signals are all present in the ranking model architecture, but they have not yet reached a state of full, fluid interaction. The path forward should follow a Two-Fork Strategy:

- Deep Integration: Refining our existing model arch to ensure the Contextual and Temporal dimensions are perfectly fused without compute bloat.

- Semantic Expansion: Building the bridge to the "Future with Meaning," where LLM-like understanding/reasoning turns high-intent signals into the primary steering wheel of the recommendation engine.

Learning #3: Moving Beyond the "LLM Identity Crisis"

With the rise of LLMs, I personally felt an identity crisis, wondering whether my knowledge of RecSys was becoming obsolete. But through this thought exercise, I’ve realized that RecSys maps a fundamentally different manifold.

- As Demis Hassabis often explains, AlphaFold was designed to model the "energy landscape", the underlying physics of how proteins fold.

- LLMs are designed to model the manifold of human knowledge and reasoning as encoded in language.

- RecSys is different. Its "Job to be Done" is to map the manifold of the human psyche, the intricate, shifting landscape of individual preferences, social identities, and temporal journeys.

Even the most advanced LLM doesn't invalidate the fundamental dimensions we have spent decades mastering:

- Connection: The social truth that we are influenced by what our peers enjoy.

- Context: The environmental truth that our needs change based on where we are and what time it is.

- Time: The biological truth that human interest is a journey of evolution.

Whether we fold these dimensions into an LLM or bring LLM-reasoning into our existing architectures, the mission of RecSys remains unique: it's building a multidimensional map of human desire.